Virtual fly. Running.

A fruit fly's brain has been mapped, neuron by neuron. Simulate that map on a GPU and you have a fly's brain running in software. We have given it a body, and a drone to pilot in the physical world.

FlyWire is a neuron-level map of a fruit fly's brain, reconstructed from electron-microscopy images of a real Drosophila melanogaster. We run all 127,000 cells as a leaky integrate-and-fire network on a single GPU using Brian2 and GeNN. Sensory inputs inject current into neuron populations identified by their FlyWire IDs. In the drone, descending-neuron output becomes velocity setpoints, which the Crazyflie firmware turns into rotor commands. Brain state persists when the body changes: membrane voltages, refractory windows, plasticity state, and motivational state such as hunger.
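To make the paragraph above concrete, here is a minimal pure-Python sketch of a leaky integrate-and-fire network indexed by FlyWire root IDs, with external drive injected into chosen IDs. It is not the Brian2/GeNN model: the parameter values are illustrative, synapses are simplified to instantaneous voltage jumps scaled by signed synapse counts, and the class name, fields, and example IDs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LIFNetwork:
    """Toy leaky integrate-and-fire network indexed by FlyWire root ID (hypothetical)."""
    flywire_ids: list[int]
    # (pre_id, post_id) -> signed synapse count; negative means inhibitory.
    connections: dict[tuple[int, int], int]
    dt: float = 0.1e-3        # Euler step (s)
    tau_m: float = 20e-3      # membrane time constant (s)
    v_rest: float = -52e-3    # resting potential (V)
    v_thresh: float = -45e-3  # spike threshold (V)
    v_reset: float = -52e-3   # post-spike reset (V)
    t_ref: float = 2.2e-3     # refractory window (s)
    w_syn: float = 0.275e-3   # voltage jump per synapse (V), illustrative

    def __post_init__(self):
        self.index = {fid: i for i, fid in enumerate(self.flywire_ids)}
        n = len(self.flywire_ids)
        self.v = [self.v_rest] * n
        self.refractory_steps = [0] * n
        self.drive = [0.0] * n  # external input expressed as R*I, in volts
        self.targets = [[] for _ in range(n)]
        for (pre, post), count in self.connections.items():
            self.targets[self.index[pre]].append((self.index[post], count * self.w_syn))

    def inject(self, ids, drive_volts):
        """Set a constant external drive on the neurons with these FlyWire IDs."""
        for fid in ids:
            self.drive[self.index[fid]] = drive_volts

    def step(self):
        """Advance one Euler step and return the FlyWire IDs that spiked."""
        spiked = []
        for i in range(len(self.v)):
            if self.refractory_steps[i] > 0:
                self.refractory_steps[i] -= 1  # held at reset while refractory
                continue
            self.v[i] += (self.v_rest - self.v[i] + self.drive[i]) / self.tau_m * self.dt
            if self.v[i] >= self.v_thresh:
                spiked.append(i)
                self.v[i] = self.v_reset
                self.refractory_steps[i] = round(self.t_ref / self.dt)
        # Deliver each spike as an instantaneous voltage jump to its targets.
        for i in spiked:
            for j, w in self.targets[i]:
                if self.refractory_steps[j] == 0:
                    self.v[j] += w
        return [self.flywire_ids[i] for i in spiked]
```

With two hypothetical IDs `A` and `B` connected by 50 excitatory synapses, `net.inject([A], 20e-3)` followed by repeated `net.step()` calls makes A fire regularly and B fire on the step right after each A spike; with no drive, neither neuron ever fires.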

Bodies in use.

NeuroMechFly v2: a biomechanical fruit-fly model, micro-CT-scanned and rigged for MuJoCo physics.

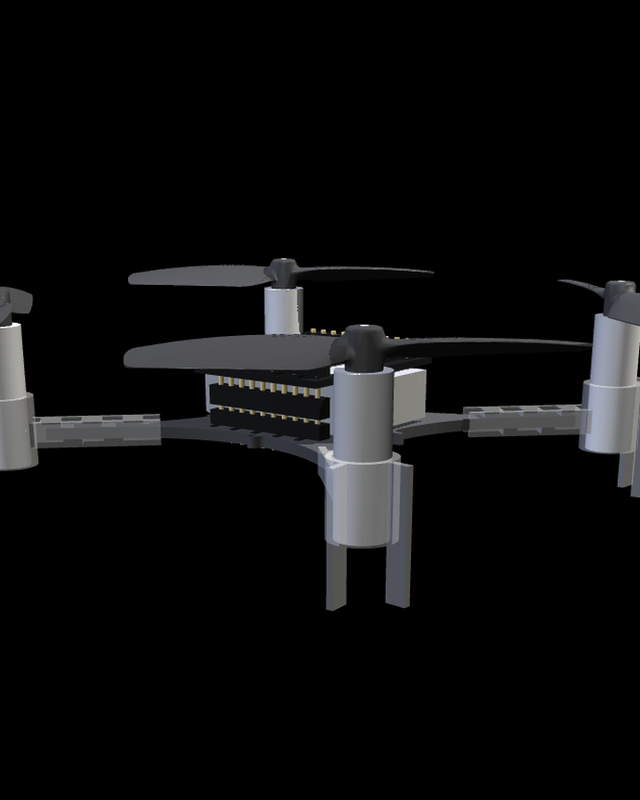

A Crazyflie 2.1 inside CrazySim's software-in-the-loop arena. The same descending-neuron output is mapped to velocity setpoints. The simulated drone runs the unmodified Crazyflie firmware and receives commands the same way a physical drone does, over a local socket instead of a radio.
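How descending-neuron spikes become setpoints is not specified here, so the following is only a sketch under assumptions: descending neurons (DNs) are grouped into hypothetical populations (`forward`, `backward`, `turn_left`, `turn_right`), each population's mean firing rate over a short window is computed, and rate differences are scaled and clamped into a forward velocity and a yaw rate. The gains, limits, sign convention, and population names are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setpoint:
    vx: float        # forward velocity (m/s)
    vy: float        # lateral velocity (m/s)
    yaw_rate: float  # deg/s; positive = turn left (assumed convention)
    z: float         # hover height (m)


def population_rate(spike_counts, ids, window_s):
    """Mean firing rate (Hz) of a DN population over the decoding window."""
    if not ids:
        return 0.0
    return sum(spike_counts.get(fid, 0) for fid in ids) / (len(ids) * window_s)


def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def decode_setpoint(spike_counts, populations, window_s, *,
                    gain_v=0.01,    # (m/s) per Hz, illustrative
                    gain_yaw=2.0,   # (deg/s) per Hz, illustrative
                    max_v=0.5, max_yaw=90.0, z=0.5):
    """Map DN spike counts {FlyWire ID: count} to a velocity setpoint.

    `populations` maps the hypothetical keys 'forward', 'backward',
    'turn_left', 'turn_right' to lists of FlyWire IDs.
    """
    def rate(key):
        return population_rate(spike_counts, populations.get(key, []), window_s)

    vx = clamp(gain_v * (rate("forward") - rate("backward")), -max_v, max_v)
    yaw = clamp(gain_yaw * (rate("turn_left") - rate("turn_right")), -max_yaw, max_yaw)
    return Setpoint(vx=vx, vy=0.0, yaw_rate=yaw, z=z)
```

Clamping keeps a burst of DN activity from producing an unsafe command. On the drone side, cflib's `Commander` offers methods such as `send_hover_setpoint(vx, vy, yawrate, zdistance)` that accept values of this shape; whether this project uses that call is an assumption.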

A physical Crazyflie 2.1 with the AI-deck streaming 320×240 grayscale frames to the off-board GPU. Descending-neuron output streams back as velocity setpoints. A radio link replaces the simulator's local socket.
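One plausible way to turn a 320×240 grayscale frame into input drive is to average-pool it into a coarse grid and assign each cell to a set of visual input neurons. The sketch below assumes an 8×6 grid, linear brightness-to-drive scaling, and a hypothetical `cell_to_ids` mapping from grid cells to FlyWire IDs; the real encoding may differ.

```python
def pool_frame(frame, grid_w=8, grid_h=6):
    """Average-pool a grayscale frame (rows of 0-255 ints) into grid_h x grid_w cells in [0, 1].

    A 320x240 frame is 240 rows of 320 pixels, giving 40x40-pixel cells on an 8x6 grid.
    """
    h, w = len(frame), len(frame[0])
    if h % grid_h or w % grid_w:
        raise ValueError("frame dimensions must divide evenly into the grid")
    ch, cw = h // grid_h, w // grid_w
    cells = []
    for gy in range(grid_h):
        row = []
        for gx in range(grid_w):
            total = sum(sum(frame[y][gx * cw:(gx + 1) * cw])
                        for y in range(gy * ch, (gy + 1) * ch))
            row.append(total / (ch * cw * 255.0))  # normalize to [0, 1]
        cells.append(row)
    return cells


def frame_to_drive(frame, cell_to_ids, max_drive=20e-3, grid_w=8, grid_h=6):
    """Return {FlyWire ID: drive in volts} for the neurons assigned to each grid cell.

    `cell_to_ids` maps (row, col) grid cells to lists of FlyWire IDs (hypothetical).
    """
    cells = pool_frame(frame, grid_w, grid_h)
    drive = {}
    for (gy, gx), ids in cell_to_ids.items():
        for fid in ids:
            drive[fid] = cells[gy][gx] * max_drive  # brighter cell, stronger drive
    return drive
```

Each drive value would then be injected into the corresponding neurons on the next simulation step. A change-sensitive encoding (differences between consecutive frames) would be a natural alternative, since pooling raw brightness ignores motion.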

The work is moving outward from the simulator. Each step keeps the same brain state, plasticity, and motivation across the change.
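A sketch of the separation this implies, with hypothetical class names: everything that must survive a body change lives in one serializable `BrainState`, and bodies are interchangeable adapters behind a small interface. Swapping the simulated drone for a physical one, or a socket for a radio, then only replaces the adapter.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Protocol


@dataclass
class BrainState:
    """State that must survive a body swap (field names are illustrative)."""
    v: list[float]               # membrane voltages (V)
    refractory_steps: list[int]  # remaining refractory steps per neuron
    weights: dict[str, float] = field(default_factory=dict)  # plastic synapses, "pre->post"
    hunger: float = 0.0          # toy motivational variable

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, text: str) -> "BrainState":
        return cls(**json.loads(text))


class Body(Protocol):
    """Anything the brain can drive: a MuJoCo fly, a CrazySim drone, a real Crazyflie."""
    def read_sensors(self) -> dict: ...
    def apply_setpoint(self, vx: float, yaw_rate: float) -> None: ...


class RecordingBody:
    """Hypothetical stand-in body that records the commands it receives."""
    def __init__(self, name: str):
        self.name = name
        self.sent: list[tuple[float, float]] = []

    def read_sensors(self) -> dict:
        return {}

    def apply_setpoint(self, vx: float, yaw_rate: float) -> None:
        self.sent.append((vx, yaw_rate))


class Agent:
    def __init__(self, state: BrainState, body: Body):
        self.state = state
        self.body = body

    def tick(self, dt: float = 0.01) -> None:
        self.body.read_sensors()            # sensory input would be injected here
        self.state.hunger += dt             # hunger grows while the agent runs
        self.body.apply_setpoint(0.1, 0.0)  # placeholder for decoded DN output

    def swap_body(self, new_body: Body) -> None:
        # Only the adapter changes; voltages, refractory counters,
        # plastic weights and hunger are carried over untouched.
        self.body = new_body
```

Keeping the state serializable also means it can be checkpointed and restored across processes, for example when the brain moves between a simulator run and a hardware run.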

Next. Physical Crazyflie, brain off-board. Vision over the AI-deck, descending-neuron output back as setpoints.

Further. Plasticity in the embodied loop. Synaptic learning during a closed-loop run, with the plasticity layer carrying across body changes.
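The learning rule is not named here, so as an assumption the sketch below uses pair-based spike-timing-dependent plasticity (STDP) with exponential traces: a presynaptic spike shortly before a postsynaptic one strengthens the synapse, and the reverse order weakens it. Parameter values and names are illustrative.

```python
import math
from dataclasses import dataclass


@dataclass
class STDPSynapse:
    w: float
    a_plus: float = 0.01      # potentiation step, illustrative
    a_minus: float = 0.012    # depression step, illustrative
    tau_plus: float = 20e-3   # presynaptic trace time constant (s)
    tau_minus: float = 20e-3  # postsynaptic trace time constant (s)
    w_max: float = 1.0
    pre_trace: float = 0.0
    post_trace: float = 0.0

    def decay(self, dt):
        self.pre_trace *= math.exp(-dt / self.tau_plus)
        self.post_trace *= math.exp(-dt / self.tau_minus)

    def on_pre_spike(self):
        # Pre after post: depress in proportion to the recent postsynaptic trace.
        self.w = max(0.0, self.w - self.a_minus * self.post_trace)
        self.pre_trace += 1.0

    def on_post_spike(self):
        # Post after pre: potentiate in proportion to the recent presynaptic trace.
        self.w = min(self.w_max, self.w + self.a_plus * self.pre_trace)
        self.post_trace += 1.0


def run_pairing(syn, pre_times, post_times, dt=0.1e-3, duration=0.2):
    """Drive one synapse with given spike times (s) and return its final weight."""
    pre = {round(t / dt) for t in pre_times}
    post = {round(t / dt) for t in post_times}
    for k in range(round(duration / dt)):
        syn.decay(dt)
        if k in pre:
            syn.on_pre_spike()
        if k in post:
            syn.on_post_spike()
    return syn.w
```

Pairing a presynaptic spike at 10 ms with a postsynaptic one at 15 ms raises the weight; swapping the order lowers it. In a closed loop, the weight and both traces would belong in the persisted brain state, so learning carries across a body change.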

Long-term. Multiple connectomes in a shared world. Several flies in one virtual space, each with independent state, plasticity, and motivation.

We are building this carefully and in the open. Subscribe for early access. Write to us at dev@wunderstory.io to collaborate or to see it run.